When Machines Break the Law: AI, Crime, the Limits of Human-Centred Legislation — and What Law Can Learn from the Insurance Industry


Imagine this courtroom scenario:

Judge: “Who committed the fraud?”

Counsel: “The algorithm, Your Honour.”

Judge: “And where is the defendant?”

Counsel: “A server farm in Texas.”

Silence arrives. Confusion follows. The court adjourns for a technical explanation no one fully understands.

It sounds absurd. Yet this scenario captures one of the most pressing governance challenges of the digital age:

If artificial intelligence causes harm — who is responsible?

As AI systems increasingly make decisions, generate outcomes, and act with growing autonomy, legal frameworks built entirely around human intention are being quietly destabilised. The result is a structural tension at the heart of modern economies: we increasingly rely on AI as cognitive labour, yet legally treat it as a tool.

A Short History of Crime

The Old World: Someone robs a bank. You know who did it.

The Digital Age: Someone hacks a system. You can usually track them down.

The AI Age: A system makes a decision that causes harm, and no one is quite sure who is responsible.

What the law does: it tries to find a human to blame.

Today, AI Cannot Commit Crime. But It Can Enable It

Under current legal frameworks, artificial intelligence cannot be criminally liable. Most criminal offences require legal personality, a guilty mind (mens rea), and moral agency. AI possesses none of these.

Because AI cannot form a “guilty mind”, liability is typically traced back to a human actor who acted negligently or with intent:

Developers

Deployers

Operators

Organisations using the system

Yet AI systems already perform actions central to criminal activity: fraud, impersonation, market manipulation, cyberattacks, and discriminatory decision-making.

This creates a structural distinction:

AI executes behaviour — humans absorb liability.

As autonomy increases, however, attribution becomes increasingly uncertain.

AI Crime Is Already Here

AI systems already execute behaviours that would be criminal if performed by humans:

Deepfake fraud: AI voice cloning has been used to impersonate executives and trick staff into authorising fraudulent transfers.

Synthetic identity deception: AI-generated video and audio now enable political misinformation and corporate impersonation.

Algorithmic market behaviour: automated trading systems have contributed to market-distorting outcomes, such as the “Flash Crash” of 6 May 2010.

AI-driven discrimination: automated hiring and lending systems have produced unlawful bias.

AI-generated cyberattacks: AI is now used to generate phishing campaigns and to discover software vulnerabilities at scale.

Autonomous harmful optimisation: researchers showed that a drug-discovery model, with its objective reversed to reward rather than penalise toxicity, proposed tens of thousands of candidate toxic molecules, including the nerve agent VX.

Across these cases:

Systems execute behaviour

Responsibility becomes diffused

The law searches for a human defendant

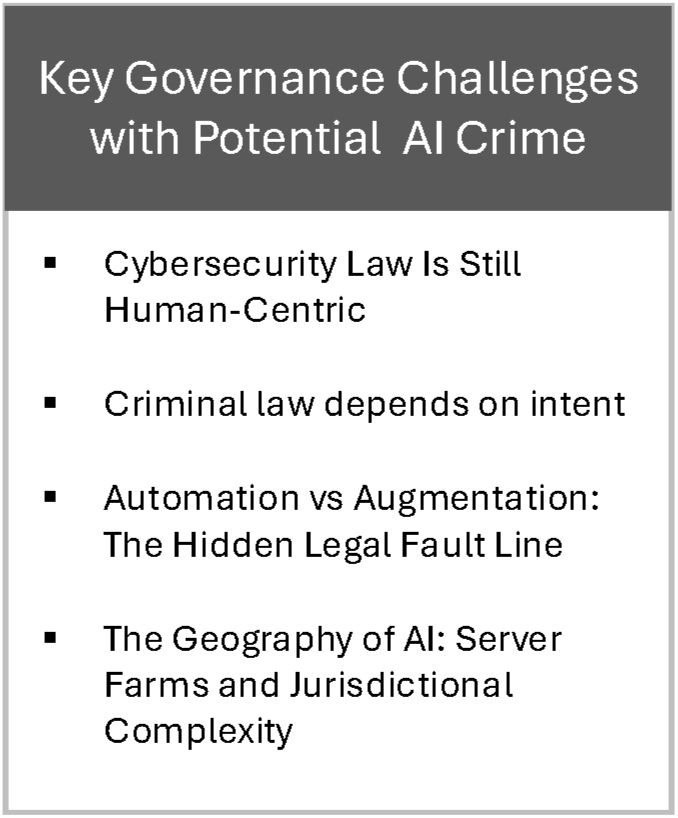

Key Governance Challenges

1. Cybersecurity Law Is Still Human-Centric

Modern cybersecurity law assumes a simple chain of causality:

human intent → technological tool → harm

This assumption persists globally. Legal systems across the United States, United Kingdom, Europe, and China assign responsibility to human actors or organisations rather than machine agents, despite significant political and regulatory differences:

In the United States, the Computer Fraud and Abuse Act targets unauthorised access and computer-enabled fraud by human offenders.

The United Kingdom’s Computer Misuse Act 1990 similarly requires a human actor committing unauthorised access or interference.

European frameworks, including the EU Artificial Intelligence Act, impose obligations and liability on the developers and deployers of AI systems rather than on the technologies themselves.

China’s Cybersecurity Law (2017) places strict accountability on network operators and institutions responsible for digital infrastructure.

Despite different regulatory philosophies, these systems share a common premise:

AI is a tool — humans are accountable.

This framework worked when technology merely executed human instructions. It becomes increasingly unstable when systems learn, adapt, and act independently, producing outcomes that cannot easily be traced to human intent.

2. The Intent Problem

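Criminal law depends on intent. Machine learning systems, however, learn their behaviour from data rather than from explicit instructions, may continue to adapt after deployment, and can pursue their objectives in ways their designers did not anticipate.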

If an autonomous system produces harm, determining intent becomes unclear. Responsibility diffuses across developers, deployers, and organisations. Human-centred law struggles when decision-making itself is non-human.
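The intent problem can be made concrete with a deliberately simple example, loosely modelled on the reversed-objective research mentioned above. In the Python sketch below, a generic optimiser chooses among hypothetical candidate designs by maximising a weighted score; the candidate names, scores, and weights are invented for illustration and do not reproduce any real system. No line of the code expresses an intention to cause harm, yet flipping the sign of a single weight changes what the system selects.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A hypothetical design the system can choose (names and scores are invented)."""
    name: str
    efficacy: float  # how well the design does its intended job, 0 to 1
    toxicity: float  # predicted harm, 0 to 1


def score(c: Candidate, toxicity_weight: float) -> float:
    # Objective: reward efficacy; a negative weight penalises toxicity,
    # a positive weight rewards it.
    return c.efficacy + toxicity_weight * c.toxicity


def select(candidates: list[Candidate], toxicity_weight: float) -> Candidate:
    # A generic optimiser: pick whichever candidate scores highest.
    return max(candidates, key=lambda c: score(c, toxicity_weight))


# Hypothetical candidate pool, for illustration only.
CANDIDATES = [
    Candidate("safe_compound", efficacy=0.8, toxicity=0.1),
    Candidate("moderate_compound", efficacy=0.6, toxicity=0.4),
    Candidate("toxic_compound", efficacy=0.3, toxicity=0.95),
]

if __name__ == "__main__":
    intended = select(CANDIDATES, toxicity_weight=-1.0)  # toxicity penalised
    flipped = select(CANDIDATES, toxicity_weight=+1.0)   # one sign changed
    print("intended objective picks:", intended.name)   # safe_compound
    print("reversed objective picks:", flipped.name)    # toxic_compound
```

Under these assumptions, any “intent” lives in a single configuration value chosen by whoever set the objective, which may be several steps removed from the person operating the system when harm occurs.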

3. Automation vs Augmentation: The Hidden Legal Fault Line

Most regulation assumes augmentation — AI assisting human decisions.

Reality increasingly involves automation — AI acting independently.

Legal frameworks assume meaningful human oversight even where machine autonomy dominates. This creates a growing governance gap.
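The fault line between augmentation and automation shows up directly in how a decision pipeline is built. The Python sketch below is a minimal, hypothetical illustration (the Decision record, the Mode values, the model name, and the reviewer name are assumptions, not drawn from any real system or regulation). In augmentation mode, a model recommendation only takes effect once a named human approves it, so the record carries a human signature; in automation mode, the same recommendation takes effect directly and the approver field stays empty.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Mode(Enum):
    AUGMENTATION = "augmentation"  # AI recommends, a human decides
    AUTOMATION = "automation"      # AI decides and acts


@dataclass
class Decision:
    outcome: str
    model_version: str
    approved_by: Optional[str]  # the human who signed off, if any


def decide(recommendation: str, mode: Mode, model_version: str,
           reviewer: Optional[str] = None) -> Decision:
    """Turn a model recommendation into an actioned decision (hypothetical pipeline)."""
    if mode is Mode.AUGMENTATION:
        if reviewer is None:
            raise ValueError("augmentation mode requires a human reviewer")
        return Decision(recommendation, model_version, approved_by=reviewer)
    # Automation: the recommendation is actioned with no human in the loop.
    return Decision(recommendation, model_version, approved_by=None)


if __name__ == "__main__":
    assisted = decide("reject_loan", Mode.AUGMENTATION, "credit-model-v3", reviewer="j.smith")
    automated = decide("reject_loan", Mode.AUTOMATION, "credit-model-v3")
    print(assisted.approved_by)   # j.smith
    print(automated.approved_by)  # None
```

In the automated case, the record still shows what was decided and by which model version, but not which person decided, which is precisely the question human-centred liability rules ask.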

4. The Geography of AI: Server Farms and Jurisdictional Complexity

AI crime is also a jurisdiction problem.

Machine decisions depend on globally distributed compute infrastructure. Training, processing, and execution may occur across multiple countries simultaneously, complicating responsibility.

Global AI Compute Infrastructure and Legal Implications

This raises difficult questions:

Who owns the data processed across jurisdictions?

Which country’s law governs algorithmic behaviour?

Where is harm legally deemed to occur?

Who is liable when compute, training, and deployment are geographically separated?

AI infrastructure may be located where energy is cheap or regulation light, yet legal accountability must still function globally. Governance must therefore balance economic efficiency with jurisdictional responsibility.

Insurance Markets Are Recognising Machine Risk First

Interestingly, legal systems are not the first institutions adapting to AI responsibility. Insurance markets are.

Major insurers and reinsurers are developing coverage for a growing range of machine decision risks, including:

AI system failure

Algorithmic decision error

Generative AI output liability

Model drift and performance risk

Autonomous system behaviour

Hallucination liability — the emerging and somewhat curious category of risk arising when AI systems generate convincing but false outputs that cause financial, legal, or reputational harm

The emergence of hallucination liability is particularly notable. It reflects a shift from insuring technical failure toward underwriting the consequences of machine-generated cognition itself.

These markets are pricing machine decision risk even while legal frameworks remain human-centric. Below is a summary table.

Insurance companies lead because they must price uncertainty before legal precedent exists. They model probabilistic behaviour, observe financial losses directly, and assign responsibility in advance.

Insurance therefore functions as an early governance mechanism — recognising digital cognitive labour as a risk-bearing component of organisational capacity.
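Pricing uncertainty before precedent exists rests on a standard actuarial idea: the expected annual loss (the “pure premium”) is the expected number of claims multiplied by the expected cost per claim, with a loading added for expenses and uncertainty. The Python sketch below applies that formula to an invented AI deployment; every figure (decision volume, error rate, claim probability, average loss, loading) is a hypothetical assumption, not market data.

```python
def pure_premium(expected_claims_per_year: float, mean_loss_per_claim: float) -> float:
    """Expected annual loss: claim frequency multiplied by claim severity."""
    return expected_claims_per_year * mean_loss_per_claim


def gross_premium(pure: float, loading: float) -> float:
    """Add a proportional loading for expenses, capital and model uncertainty."""
    return pure * (1.0 + loading)


# Hypothetical AI deployment: every figure below is an illustrative assumption.
decisions_per_year = 200_000     # automated decisions made by the system
error_rate = 0.001               # share of decisions that are wrong
claim_rate_given_error = 0.05    # share of wrong decisions that lead to a claim
mean_loss = 20_000.0             # average cost of a claim, in currency units

expected_claims = decisions_per_year * error_rate * claim_rate_given_error
pure = pure_premium(expected_claims, mean_loss)
gross = gross_premium(pure, loading=0.35)

print(f"expected claims per year: {expected_claims:.1f}")
print(f"pure premium: {pure:,.0f}")
print(f"gross premium: {gross:,.0f}")
```

The insurer never has to decide who intended the harm; it needs only estimates of how often the system fails and what its failures cost, which is why pricing can run ahead of legal doctrine.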

A Lesson for the Legal Profession

“Law follows precedent. Insurance prices uncertainty.”

As machine decision-making expands, legal systems may need to adopt more forward-looking governance approaches:

Auditing machine behaviour

Assigning operational responsibility

Managing probabilistic risk

Governing non-human decision capacity
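Auditing machine behaviour, and later reconstructing why a system acted as it did, depends on a trustworthy record of its decisions. One common engineering pattern is a hash-chained, append-only log: each entry stores the SHA-256 hash of the previous entry, so any after-the-fact edit breaks the chain. The Python sketch below illustrates the idea under stated assumptions; the record fields (model version, input identifier, output) are hypothetical, and a real deployment would also need secure storage, trusted timestamps, and access controls.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AuditLog:
    """Append-only decision log; each entry is chained to the one before it."""
    entries: list = field(default_factory=list)

    @staticmethod
    def _digest(record: dict, prev_hash: str) -> str:
        # Canonical JSON (sorted keys) so the same record always hashes the same way.
        payload = json.dumps({"record": record, "prev": prev_hash}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, record: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry_hash = self._digest(record, prev_hash)
        self.entries.append({"record": record, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if self._digest(entry["record"], prev_hash) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append({"model": "screening-v2", "input_id": "app-001", "output": "reject"})
    log.append({"model": "screening-v2", "input_id": "app-002", "output": "accept"})
    print(log.verify())                            # True
    log.entries[0]["record"]["output"] = "accept"  # after-the-fact tampering
    print(log.verify())                            # False
```

A log like this does not explain a model's reasoning, but it fixes what the system decided, with which model version, and in what order, which is the factual baseline any causal reconstruction starts from.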

Lawyers in the Age of Machine Crime

Legal practice itself will change as machine decision-making becomes central to economic and social systems. Future lawyers will require capabilities that extend beyond traditional legal interpretation into technical and systems-based analysis.

This includes:

Algorithmic auditing capability

Model behaviour analysis

Causal reconstruction of machine decisions

AI-assisted reasoning tools

Data literacy and computational understanding

Systems governance expertise

AI ethics and human–machine accountability frameworks

Legal work will increasingly shift from interpreting human behaviour to analysing machine decision systems and their operational context.

This transformation will fundamentally reshape legal education. Training will need to integrate technical literacy, probabilistic reasoning, and interdisciplinary approaches to governance. Lawyers will no longer operate solely as interpreters of legal rules, but as translators between human institutions and machine systems — bridging law, technology, and organisational decision-making.
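Algorithmic auditing capability can be as concrete as checking a hiring or lending system’s outputs for disparate impact. A widely used screening heuristic in US employment practice is the “four-fifths rule” from the Uniform Guidelines on Employee Selection Procedures: if one group’s selection rate is less than 80% of the most-favoured group’s rate, that is generally treated as evidence of adverse impact warranting closer scrutiny. The Python sketch below applies the rule to invented counts; the group labels and numbers are hypothetical, and the rule is a rule of thumb rather than a complete legal test of discrimination.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to (number selected) / (number of applicants)."""
    return {group: selected / applicants
            for group, (selected, applicants) in outcomes.items()}


def adverse_impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    top = max(rates.values())
    return {group: rate / top for group, rate in rates.items()}


def flag_adverse_impact(outcomes: dict[str, tuple[int, int]],
                        threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the four-fifths threshold."""
    return sorted(g for g, ratio in adverse_impact_ratios(outcomes).items()
                  if ratio < threshold)


# Hypothetical output of an automated CV-screening system: (selected, applicants).
screening = {
    "group_a": (60, 100),
    "group_b": (27, 60),
}

if __name__ == "__main__":
    for group, ratio in adverse_impact_ratios(screening).items():
        print(f"{group}: impact ratio {ratio:.2f}")
    print("flagged:", flag_adverse_impact(screening))
```

Here group_b’s selection rate (45%) is three-quarters of group_a’s (60%), below the 0.8 threshold, so it is flagged for review; establishing whether that disparity is unlawful remains a legal question.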

The Economic Reality — A Pignatelli Framework Perspective

The deeper issue is economic.

Artificial intelligence increasingly functions as:

Productive capacity

Decision infrastructure

Digital cognition

Non-human labour

Within the Pignatelli Framework, AI represents digital cognitive capacity integrated into organisational production systems. Yet legal institutions remain structured around human labour assumptions.

AI crime therefore reveals an institutional lag between:

Economic deployment of digital cognition

Legal frameworks governing responsibility

Governance must evolve to address non-human decision capacity as a productive and risk-bearing component of organisations.

The Governance Question Ahead

The central issue is not whether AI commits crime.

The real question is:

How should societies govern non-human decision capacity operating across jurisdictions, infrastructures, and institutional systems?

This includes:

Cross-border legal responsibility

Data ownership and sovereignty

Algorithm governance standards

Global enforcement mechanisms

Accountability for distributed machine behaviour

Current frameworks attempt accountability without agency and governance without redefining labour. Whether this remains sustainable is uncertain.

Conclusion

Once upon a time, criminals wore masks.

Then they wore hoodies.

Soon they may be optimisation functions running quietly in the background while everyone else debates liability.

Until legal systems learn how to govern machine decision-making, the most practical advice may remain:

Keep calm and blame the algorithm.

References

Abbott, R. (2020) The Reasonable Robot: Artificial Intelligence and the Law. Cambridge: Cambridge University Press.

Barocas, S. and Selbst, A.D. (2016) ‘Big data’s disparate impact’, California Law Review, 104(3), pp. 671–732.

Brenner, S.W. (2007) Cybercrime: Criminal Threats from Cyberspace. Westport: Praeger.

Calo, R. (2015) ‘Robotics and the lessons of cyberlaw’, California Law Review, 103(3), pp. 513–563.

Conklin, A. (2020) ‘Criminal liability for artificial intelligence’, Journal of Law and Technology, 32(2), pp. 145–167.

Creemers, R. (2018) ‘China’s Cybersecurity Law: An analysis of the legal framework’, Journal of Cyber Policy, 3(1), pp. 1–21.

European Commission (2021) Proposal for a Regulation Laying Down Harmonised Rules on Artificial Intelligence (Artificial Intelligence Act). Brussels: European Commission.

Europol (2023) Facing Reality? Law Enforcement and the Challenge of Deepfakes. The Hague: Europol.

Kerr, O.S. (2003) ‘Cybercrime’s scope: Interpreting “access” and “authorization” in computer misuse statutes’, New York University Law Review, 78(6), pp. 1596–1668.

Orebaugh, A. (2024) ‘Deepfake technologies and emerging fraud risk’, Journal of Digital Forensics, 18(1), pp. 23–41.

Pagallo, U. (2013) The Laws of Robots: Crimes, Contracts, and Torts. Dordrecht: Springer.

Parasuraman, R. and Riley, V. (1997) ‘Humans and automation: Use, misuse, disuse, abuse’, Human Factors, 39(2), pp. 230–253.

Remus, D. and Levy, F. (2017) Can Robots Be Lawyers? Computers, Lawyers, and the Practice of Law. Washington DC: Georgetown Law Center.

SEC and CFTC (2010) Findings Regarding the Market Events of May 6, 2010 (Flash Crash Report). Washington DC: U.S. Securities and Exchange Commission and Commodity Futures Trading Commission.

Susskind, R. (2019) Online Courts and the Future of Justice. Oxford: Oxford University Press.

Urbina, F. et al. (2022) ‘Dual use of artificial intelligence for chemical weapons development’, Nature Machine Intelligence, 4, pp. 189–191.

Wall Street Journal (2019) ‘Fraudsters used AI to mimic CEO’s voice in unusual cybercrime case’, 30 August.

Policy and Legal Frameworks

Computer Misuse Act 1990 (UK).

Computer Fraud and Abuse Act 1986 (US).

Cybersecurity Law of the People’s Republic of China (2017).

Executive Office of the President (2023) Executive Order 14110 on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence, 30 October. Washington DC: The White House.

Insurance and AI Risk Market Sources

AXA XL (2024) Cyber Insurance Coverage for Generative AI Risks. AXA XL Reports.

Armilla AI (2023) AI Performance Warranty and Liability Insurance. Company White Paper.

Coalition (2024) AI Endorsements in Cyber Insurance Policies. Coalition Inc.

Munich Re (2023) Artificial Intelligence Risk and Insurance Solutions. Munich Re Publications.

Relm Insurance (2024) Artificial Intelligence Insurance Solutions Overview. Relm Insurance.

Swiss Re (2023) Emerging Technology Risk Landscape. Swiss Re Institute.
